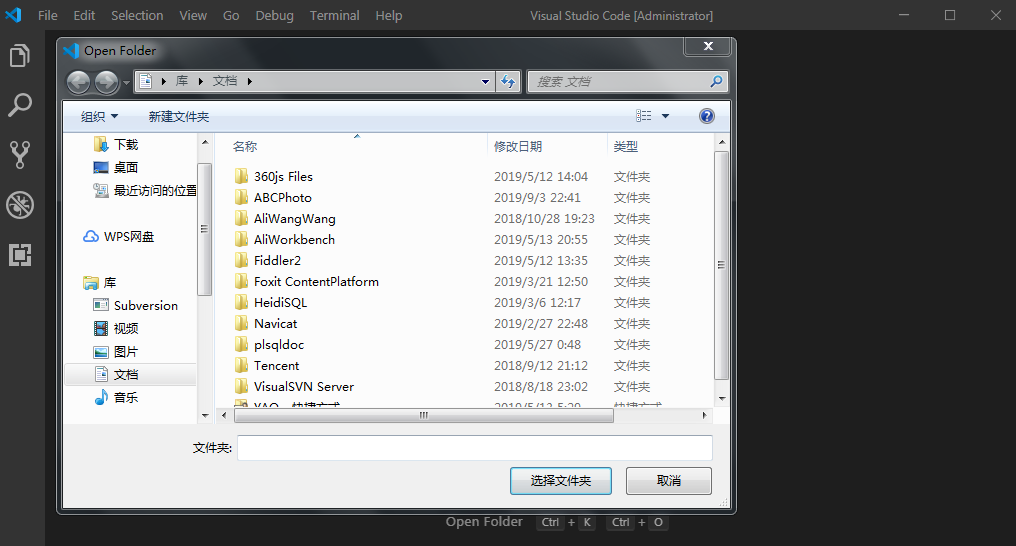

If you are familiar with Curl, the command line tool for transferring data with URL syntax, you might wonder how that can be done with NodeJS. Node.js has a built-in module called HTTP, which allows Node.js to transfer data over the Hyper Text Transfer Protocol (HTTP). Looking at the NodeJS API, it's clear that http.request gives you something that looks like a Curl equivalent. But I want to write the response to a file and do some looping, so that I can use an array of URLs instead of just making a request to one URL.

Looking around, I found a great NodeJS module called request, which is a small abstraction on top of http.request and makes writing my script much easier. First, I added the request and filesystem modules to my script, plus the array of URLs I want to scrape. Then I wrote a for-loop to iterate through the array and, inside the loop, put the request function, passing the file and url parameters, along with some console.log() calls to print what's happening. In the output, only the second url and file was scraped. This is because requests in NodeJS are asynchronous: the callbacks run after the loop has already finished, so they all see the final values of the loop variables. To fix this, we need to pass the url and file from the loop to a separate function.

Another good NodeJS module for doing curl-like web scraping is curlrequest. Of course, for some serious web scraping, I recommend using PhantomJS.

A URL string is a structured string containing multiple meaningful components. When parsed, a URL object is returned containing properties for each of these. The URL object representation of a URL can also be used to change those parts: var URL = require('url').URL

For joining URL parts there is the url-join package (latest version: 5.0.0, last published: 4 months ago). Start using url-join in your project by running npm i url-join. There are 2949 other projects in the npm registry using url-join.

Learn more about working with URLs and building your own web server application with Node.js with The Node Beginner Book.
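The "separate function" fix for the loop can be sketched roughly as follows. This is a minimal sketch, not the original script: it swaps the request module for Node's built-in http and https modules, the names fetchBody, scrape, scrapeAll and the targets list are hypothetical, and it does not follow redirects or check status codes.

```javascript
const fs = require('fs');
const http = require('http');
const https = require('https');

// Download a URL with the built-in modules and pass the body to a callback.
// (Assumption: stands in for the `request` module used in the original script.)
function fetchBody(url, callback) {
  const client = url.startsWith('https:') ? https : http;
  client.get(url, (res) => {
    let body = '';
    res.setEncoding('utf8');
    res.on('data', (chunk) => { body += chunk; });
    res.on('end', () => callback(null, body));
  }).on('error', callback);
}

// The separate function: every call gets its own `url` and `file` bindings,
// so the asynchronous callback still sees the right pair when it finally runs.
function scrape(url, file, fetcher = fetchBody) {
  console.log('requesting ' + url);
  fetcher(url, (err, body) => {
    if (err) {
      console.log('failed ' + url + ': ' + err.message);
      return;
    }
    fs.writeFileSync(file, body);
    console.log('saved ' + url + ' to ' + file);
  });
}

// The loop only hands each pair over; it no longer captures loop variables itself.
function scrapeAll(list, fetcher) {
  for (var i = 0; i < list.length; i++) {
    scrape(list[i].url, list[i].file, fetcher);
  }
}

// Hypothetical targets, for illustration only.
const targets = [
  { url: 'https://example.com/', file: 'example.html' },
  { url: 'https://example.org/', file: 'example-org.html' },
];

// Uncomment to run against the network:
// scrapeAll(targets);
```

The optional fetcher parameter is only there so the download step can be replaced, for example with a stub when trying the loop out offline.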
The recently released version 8.0.0 of Node.js made the experimental implementation of the WHATWG URL parser from Node.js v7.0.0 non-experimental and fully supported. Here's what you can use it for.

A URL consists of several different parts, e.g. the host part or the search part (?bar=1, often called the query string). When writing Node.js web server software, you regularly need to access or even manipulate those different parts. While this can be achieved by working with the URL as a simple string, splitting this string into its different logical parts manually with substring operations or regular expressions is very cumbersome. A dedicated library makes this very easy.

You have already been able to parse URLs in Node.js before, via the url module, but this provided a very Node.js specific implementation which is now considered legacy. The url module now provides an additional implementation of the standardized WHATWG URL API, making the URL-parsing code of Node.js work identically to the way web browsers parse URLs. The new API is based on the URL object, which makes the different parts of a URL available as object attributes: var URL = require('url').URL

Here is an overview of the different parts of a URL and how the corresponding URL object attribute is named, using the example " https: // user : pass : 80 /p/a/t/h ? query=string #hash ":

- protocol: https:
- username: user
- password: pass
- host: the host name together with the port
- origin: the protocol together with the host
- pathname: /p/a/t/h
- search: ?query=string
- hash: #hash

The querystring.stringify() method produces a URL query string from a given obj by iterating through the object's 'own properties'.

For a URL shortener, create a folder called routes in the root directory and a file named urls.js inside of it. We are going to use the Express router here. First, import all of the necessary packages. The URL route will create a short URL from the original URL and store it inside the database.
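To make the URL object attributes concrete, here is a minimal sketch of reading and changing URL parts with the WHATWG URL class from the url module. The example URL (including the host example.com and the port 8080) and the helper names describeUrl and withChanges are assumptions made for illustration.

```javascript
const { URL } = require('url');

// Return the individual parts of a URL as a plain object.
function describeUrl(input) {
  const u = new URL(input);
  return {
    protocol: u.protocol,
    username: u.username,
    password: u.password,
    host: u.host,
    hostname: u.hostname,
    port: u.port,
    origin: u.origin,
    pathname: u.pathname,
    search: u.search,
    hash: u.hash,
  };
}

// Change some parts through the URL object and return the resulting string.
function withChanges(input) {
  const u = new URL(input);
  u.pathname = '/other/path';
  u.searchParams.set('bar', '1'); // adds bar=1 to the existing query string
  return u.href;
}

// Hypothetical example URL.
const example = 'https://user:pass@example.com:8080/p/a/t/h?query=string#hash';

console.log(describeUrl(example));
console.log(withChanges(example));
// → https://user:pass@example.com:8080/other/path?query=string&bar=1#hash
```

Working on a copy inside each helper keeps the input string untouched, which avoids surprises when the same URL is reused elsewhere.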
The encodeURIComponent option of querystring.stringify() sets the function to use when converting URL-unsafe characters to percent-encoding in the query string.
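As a rough illustration of querystring.stringify() and its encodeURIComponent option, the sketch below builds one query string with the default encoding and one with a custom encoder. The helper names toQuery and toFormQuery and the sample objects are hypothetical; the custom encoder that turns spaces into + is just one possible choice.

```javascript
const querystring = require('querystring');

// Default behaviour: iterate the object's own properties and percent-encode them.
function toQuery(obj) {
  return querystring.stringify(obj);
}

// Custom encoder passed via the encodeURIComponent option:
// encode as usual, then write spaces as '+' instead of '%20'.
function toFormQuery(obj) {
  return querystring.stringify(obj, null, null, {
    encodeURIComponent: (s) => encodeURIComponent(s).replace(/%20/g, '+'),
  });
}

console.log(toQuery({ foo: 'bar', baz: ['qux', 'quux'], corge: '' }));
// → foo=bar&baz=qux&baz=quux&corge=

console.log(toQuery({ q: 'node js' }));
// → q=node%20js

console.log(toFormQuery({ q: 'node js' }));
// → q=node+js
```

Passing null for the separator and assignment arguments keeps their defaults (& and =), so only the encoding changes.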
